Hi Inner Circle!

Welcome to this week’s edition.

AI regulation is no longer something far away in policy papers.

On August 2, 2026, the EU AI Act becomes fully applicable, and for many companies this is where AI governance becomes real.

If you build, deploy or integrate AI systems in Europe, it will no longer be enough to say the model works.

You will need to prove that it is safe, explainable, documented, monitored and properly governed.

This edition breaks down what actually changes, which AI systems are affected, what high-risk AI means, and why this date could become one of the biggest turning points for the tech industry in Europe.

Whether you are working in cloud, AI, security, compliance, or product, this is something worth understanding before enforcement starts.

Let’s get into it ~

Before we begin…

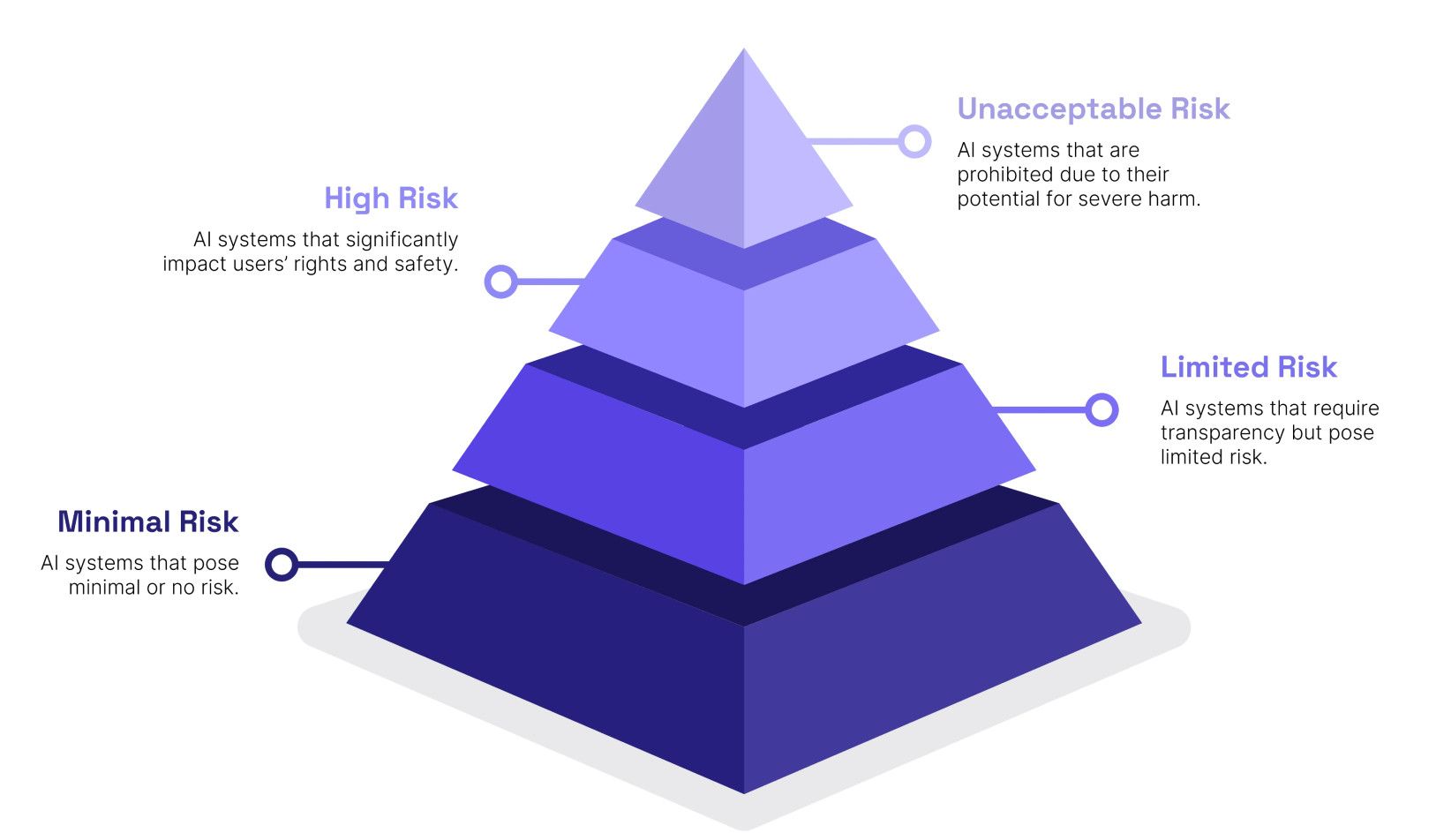

The AI Act does not regulate every AI system in the same way.

The whole regulation is built around one simple idea:

The higher the risk, the stricter the rules.

That means a spam filter is treated very differently from an AI system used in hiring, healthcare, credit scoring, law enforcement or border control.

1. What Happens on August 2, 2026?

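On August 2, 2026, the EU AI Act, the world’s first comprehensive AI regulation, becomes generally applicable. A few obligations follow later: high-risk AI embedded in products already covered by EU product safety law has until August 2, 2027.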

For many organizations building or deploying AI systems, this date marks the end of the transition period and the beginning of real regulatory enforcement.

The AI Act introduces a risk-based regulatory framework for artificial intelligence, designed to ensure that AI systems deployed in Europe are safe, transparent and aligned with fundamental rights.

The message from regulators is clear:

If you deploy AI systems in Europe, you must be able to prove they are safe, explainable and governed properly.

For companies building AI products or integrating AI into decision-making systems, this is not just a compliance exercise. It is a structural shift in how AI must be designed, documented and monitored in production.

2. Why the EU Created the AI Act

Artificial intelligence is increasingly used in areas that directly affect people’s lives:

hiring decisions

credit scoring

healthcare diagnostics

education access

law enforcement

border control

However, many modern AI systems behave as opaque decision engines: it is difficult to understand why a model made a specific decision.

This lack of transparency creates serious risks:

discrimination in hiring or lending

biased decision making

unjust denial of public services

violations of fundamental rights

The AI Act aims to ensure that AI systems can be trusted, while still enabling innovation and economic growth across Europe.

→ Imagine a candidate is rejected by an AI hiring tool, but nobody can explain which criteria influenced the decision. That is exactly the type of situation the AI Act wants to address.

3. The Risk-Based Model

Instead of regulating all AI equally, the AI Act separates AI systems into risk categories.

The four risk levels:

Unacceptable risk

High risk

Transparency risk

Minimal risk

This classification decides how much compliance work a company actually has to do.

A recommendation engine and an AI credit scoring system are not treated the same way, because the potential harm is not the same.
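To make the tiering idea concrete, here is a minimal Python sketch of a first-pass lookup. The use-case labels and the `classify` helper are hypothetical and only mirror examples from this edition (the `chatbot` entry is an added assumption). A real classification depends on the legal text and context, so anything unlisted is returned as `None`, meaning it needs review.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    TRANSPARENCY = "transparency"
    MINIMAL = "minimal"


# Hypothetical labels for illustration only; not an official taxonomy.
USE_CASE_TIERS = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "recruitment": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "border_control": RiskTier.HIGH,
    "chatbot": RiskTier.TRANSPARENCY,  # assumption, not taken from this edition
    "spam_filter": RiskTier.MINIMAL,
}


def classify(use_case: str) -> Optional[RiskTier]:
    """Return the illustrative tier, or None if the use case needs manual review."""
    return USE_CASE_TIERS.get(use_case)


if __name__ == "__main__":
    for name in ("spam_filter", "credit_scoring", "recommendation_engine"):
        tier = classify(name)
        print(name, "->", tier.value if tier else "needs review")
```

Returning `None` instead of defaulting to minimal risk is a deliberate choice in this sketch: an unknown system should trigger a review, not a silent pass.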

4. Unacceptable Risk: AI Systems That Are Banned

Certain AI practices are considered a clear threat to fundamental rights and are therefore prohibited.

These bans have already applied since February 2, 2025.

Examples include:

AI systems designed for manipulation or deception

exploiting vulnerable individuals

social scoring systems

predictive policing based on personal characteristics

large-scale scraping of internet or CCTV images to build facial recognition databases

emotion recognition in workplaces or schools

biometric categorization inferring protected characteristics

real-time biometric identification in public spaces for law enforcement (outside narrowly defined exceptions)

These systems cannot legally be deployed in the EU. Put simply, these are uses of AI that manipulate, exploit or surveil people.

5. High-Risk AI: The Category Companies Should Watch

This is probably the most important section for you.

High-risk AI systems are allowed, but only under strict obligations.

Examples include AI used in:

recruitment and HR

education and exam scoring

critical infrastructure

healthcare and medical devices

credit scoring

law enforcement

migration and border control

judicial decision support

For these systems, companies need things like:

risk management

high-quality datasets

technical documentation

logging and traceability

human oversight

cybersecurity and robustness

This is where the AI Act becomes operational. Companies cannot just deploy a model and hope for the best. They need governance around the full AI lifecycle.

Scenario

A bank uses AI to support credit scoring. The model may be accurate, but the company still needs to prove how the system works, how bias is managed, who oversees it and how decisions can be challenged.
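For the logging and traceability part of that scenario, a rough Python sketch of a per-decision audit record could look like this. The field names and the `build_decision_record` helper are assumptions for illustration; the AI Act does not prescribe this format.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_decision_record(model_version, features, score, reviewer):
    """Build one audit-trail entry for an AI-assisted decision (illustrative fields)."""
    # Hash the inputs so the record is traceable without storing raw personal data.
    payload = json.dumps(features, sort_keys=True).encode("utf-8")
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_hash": hashlib.sha256(payload).hexdigest(),
        "score": score,
        "human_reviewer": reviewer,  # who can oversee or overturn the decision
    }


if __name__ == "__main__":
    record = build_decision_record(
        "credit-model-1.4", {"income": 52000, "tenure_months": 18}, 0.71, "analyst_42"
    )
    print(json.dumps(record, indent=2))
```

The point is less the exact fields and more that every decision leaves a record tying together the model version, the inputs and the person responsible for oversight.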

6. The Real Challenge: AI Governance

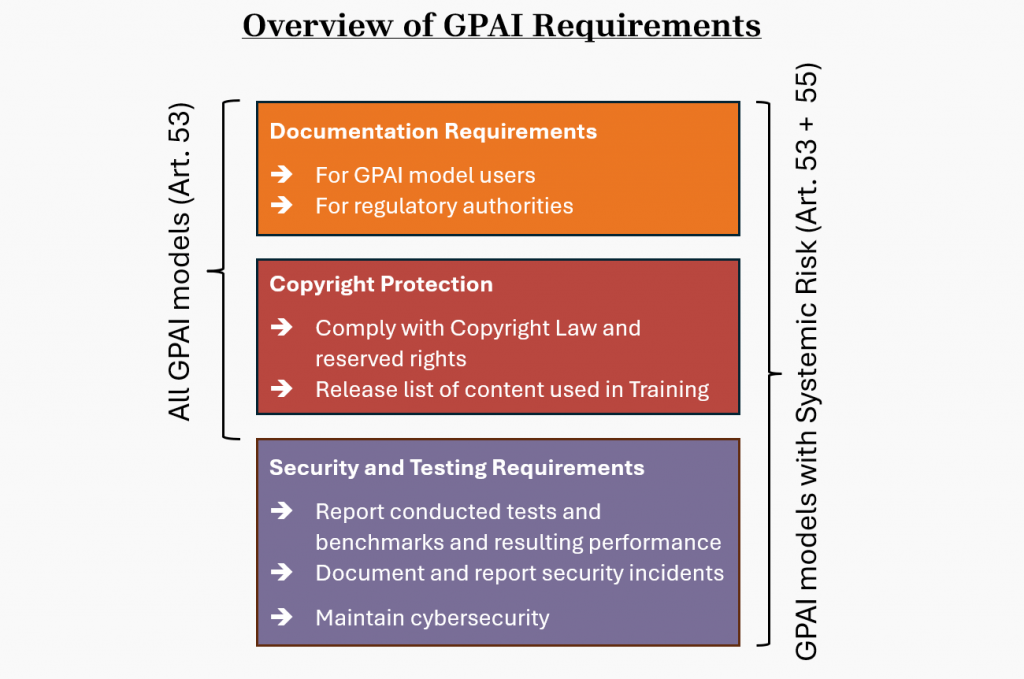

The regulation also addresses General-Purpose AI models, such as large language models.

Providers of these models must comply with obligations related to:

transparency

copyright compliance

safety and security risk assessments

Models that could pose systemic risk due to scale or capabilities must implement additional safeguards.

These rules became applicable in August 2025.

7. General-Purpose AI Models: The LLM Layer

The hardest part of the AI Act is turning AI governance into a real operating model.

Companies need to answer questions like:

What AI systems do we use?

Which risk category do they fall into?

What data was used?

How is bias monitored?

Who is responsible for oversight?

How are incidents reported?

How do we prove the model is safe?

How do we explain AI-assisted decisions?

This is where many companies will struggle. They may have AI tools in production, but no proper inventory, no clear ownership, no logging and no audit trail.

Scenario

A company has 30 AI tools across HR, sales, support, legal and finance. Nobody owns the full AI inventory. That becomes a governance problem before it becomes a technical problem.
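A first inventory does not need special tooling. As a minimal sketch in Python, assuming a hypothetical `AISystemRecord` structure and `governance_gaps` check (neither comes from the regulation), it could be as simple as:

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AISystemRecord:
    """One row in a hypothetical AI inventory."""
    name: str
    department: str
    risk_tier: Optional[str] = None  # e.g. "high", "minimal"; None = not classified yet
    owner: Optional[str] = None      # person accountable for oversight
    logging_enabled: bool = False


def governance_gaps(record: AISystemRecord) -> List[str]:
    """List the basic governance questions this record cannot answer yet."""
    gaps = []
    if record.owner is None:
        gaps.append("no owner")
    if record.risk_tier is None:
        gaps.append("no risk classification")
    if not record.logging_enabled:
        gaps.append("no logging")
    return gaps


if __name__ == "__main__":
    inventory = [
        AISystemRecord("cv-screening", "HR", risk_tier="high", owner="hr-lead",
                       logging_enabled=True),
        AISystemRecord("support-bot", "Support"),
    ]
    for rec in inventory:
        print(rec.name, "->", governance_gaps(rec) or "ok")
```

Running a check like this across all 30 tools shows straight away where ownership, classification or logging is missing.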

9. Fines and Enforcement

Non-compliance can lead to serious penalties.

The highest fines can reach:

€35 million

or 7% of global annual turnover → whichever is higher

The exact penalty depends on the type of violation. The top tier applies to prohibited AI practices; other violations carry lower caps.
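The "whichever is higher" rule is simple arithmetic. Here is a small Python sketch of the top-tier cap described above; the function name and the turnover figures are made up for illustration, and amounts are whole euros.

```python
FIXED_CAP_EUR = 35_000_000   # top-tier fixed amount
TURNOVER_PERCENT = 7         # top-tier share of global annual turnover


def max_fine_cap_eur(global_annual_turnover_eur: int) -> int:
    """Upper bound of the top-tier fine: the higher of the two amounts."""
    turnover_based = global_annual_turnover_eur * TURNOVER_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)


if __name__ == "__main__":
    # 7% of EUR 200 million is EUR 14 million, so the fixed EUR 35 million applies.
    print(max_fine_cap_eur(200_000_000))
    # 7% of EUR 2 billion is EUR 140 million, which exceeds the fixed amount.
    print(max_fine_cap_eur(2_000_000_000))
```

For large companies the percentage is what matters: above EUR 500 million in turnover, 7% is always more than EUR 35 million.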

This is what gives the AI Act teeth. Because money talks…

10. What Companies Should Start Doing Now

Companies should start with:

AI system inventory

risk classification

documentation templates

human oversight design

logging and monitoring

bias and robustness testing

incident reporting process

vendor and model provider review

AI governance ownership

If a company only starts preparing when enforcement begins, it may discover too late that nobody can explain which AI systems are deployed, which data they use, and who is responsible for them.

That’s it for this week.

The EU AI Act is about proving that AI systems can be trusted.

For companies operating in Europe, the key takeaway is simple:

AI systems must now be explainable, auditable and governed.

The era of deploying black-box AI without accountability is coming to an end.

See you in the next one.

- Rami